State of AI in enterprises

Enterprises are spending more on AI than ever, yet almost none of them are seeing returns. That gap — between investment and impact — is now the defining challenge of enterprise AI. The problem is not the technology. The models are capable, the infrastructure is maturing, and the use cases are real. What's missing is a reliable way to connect AI capabilities to the business problems that actually warrant them, and to embed those capabilities into workflows designed to use them. This paper examines why most enterprise AI initiatives stall, introduces a practical taxonomy for matching AI tools to business needs, and outlines a decision framework that moves organizations from experimentation to measurable value.

Framing the problem

Enterprises spent an estimated $37 billion on generative AI last year, about 3.2 times the $11.5 billion they spent in 2024.[1] In addition, McKinsey's global survey on the state of AI reports that 88% of organizations now use AI in at least one business function, up from 78% in 2024.[2] By every measure of adoption, the technology has arrived.

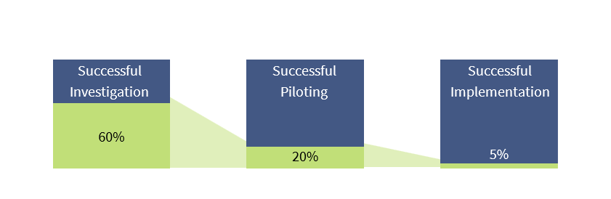

The results have not. In 2025, MIT researchers studied 300 enterprise AI deployments and found that, despite surging investment in AI tools within companies, 95% produced no measurable financial return.[3] Only 5% of integrated pilots resulted in value creation. Leaders report sitting through dozens of vendor demos and still being unable to find solutions that meet their organization's existing needs. A Goldman Sachs calculation points the same way: hundreds of billions of dollars in U.S. AI infrastructure investment contributed "basically zero" to GDP growth in 2025.[4] This raises the question: is AI transformative for enterprises, or is it a bubble waiting to burst?

Fig 1: Current state of successful AI adoption in enterprises

BCE’s AI Foundry believes that the issue is not whether AI can deliver value. The question, and where enterprises consistently fall behind, is what to adopt, where to deploy it, and how to move from pilot to enterprise-wide deployment.

Why AI adoption in enterprises fails

Most enterprise AI initiatives fail for two reasons. The first is choosing the wrong tool for the job. Generative AI, the technology behind tools such as ChatGPT and Claude, has dominated the AI adoption conversation and become the default choice for deployment in organizations. However, many business problems are better solved by AI systems designed specifically for prediction, pattern recognition, or process automation. When companies default to generative AI because it is the most visible option, they end up with an impressive demo that solves the wrong problem.

The second failure is deploying a tool without redesigning the work around it. MIT's research found that the vast majority of enterprise AI pilots stall not because the models underperform, but because they are dropped into existing workflows that were never built to accommodate them. Companies that report real financial returns from AI are three times more likely to have redesigned their processes before deploying it. BCE AI Foundry believes that successful deployment of AI tools within enterprises starts with a strategic choice: matching the right tool to the right workflow.

Matching the right tool to the right workflow requires a shared understanding of how different AI capabilities connect to specific business processes. Most organizations do not have one. OpenAI's enterprise research found that current models can do far more than organizations have put into practice, not because the technology falls short but because companies lack a structured way to connect what AI can do to the work that would benefit from it.[5] Without a common way to map AI capabilities to business processes, enterprises end up evaluating tools they do not fully understand against workflows they have not clearly defined.

BCE AI Decision Taxonomy

BCE proposes a practical AI taxonomy as a shared enterprise language that improves decision speed and quality. Done well, it helps leadership teams answer three questions consistently:

- What capabilities do we need? Classify the problem before evaluating vendors. Prediction, perception, language understanding, language generation, and agentic orchestration are distinct capabilities with different reliability profiles, integration needs, and governance implications.

- What workflow building blocks are required to deliver value? AI creates value when embedded into accountable workflows with clear outcomes, business context integration, exception handling, and performance monitoring — not when deployed as isolated models.

- How do we right-size the solution? Bigger models are not inherently better models. A usable taxonomy makes trade-offs explicit, for example, contrasting large versus small language models across parameters, training data, infrastructure requirements, best-fit use cases, and expected benefits. Right-sizing means selecting the simplest model or system that satisfies latency, cost, privacy, and auditability constraints.
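The right-sizing rule in the last question can be read as a simple selection procedure: filter candidates by the stated constraints, then take the simplest one that remains. The sketch below is one minimal way to express that, not a BCE tool. The `Candidate` and `Constraints` types, the `complexity` ranking, the `meets_quality_bar` field, and all numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    """A hypothetical model or system option under evaluation."""
    name: str
    complexity: int              # 1 = simplest; higher = larger or harder to operate
    meets_quality_bar: bool      # assumed: passed a task-specific evaluation
    latency_ms: float            # typical response time
    cost_per_1k_requests: float  # illustrative unit cost
    runs_in_private_env: bool    # can run where sensitive data must stay
    auditable: bool              # decisions can be traced and explained


@dataclass(frozen=True)
class Constraints:
    """Hypothetical limits set by the business for one use case."""
    max_latency_ms: float
    max_cost_per_1k_requests: float
    requires_private_env: bool
    requires_audit_trail: bool


def satisfies(c: Candidate, k: Constraints) -> bool:
    """True if the candidate meets every latency, cost, privacy, and audit limit."""
    return (
        c.meets_quality_bar
        and c.latency_ms <= k.max_latency_ms
        and c.cost_per_1k_requests <= k.max_cost_per_1k_requests
        and (c.runs_in_private_env or not k.requires_private_env)
        and (c.auditable or not k.requires_audit_trail)
    )


def right_size(candidates: Sequence[Candidate], k: Constraints) -> Optional[Candidate]:
    """Return the simplest viable candidate, or None if nothing qualifies."""
    viable = [c for c in candidates if satisfies(c, k)]
    return min(viable, key=lambda c: c.complexity, default=None)


# Made-up options for a single use case.
candidates = [
    Candidate("rules engine", 1, False, 5, 0.01, True, True),
    Candidate("small language model", 2, True, 120, 0.40, True, True),
    Candidate("large hosted language model", 3, True, 900, 6.00, False, False),
]
constraints = Constraints(
    max_latency_ms=500,
    max_cost_per_1k_requests=1.00,
    requires_private_env=True,
    requires_audit_trail=True,
)

choice = right_size(candidates, constraints)
print(choice.name if choice else "no viable option")
```

With these illustrative inputs, the rules engine is filtered out because it does not meet the quality bar, and the large hosted model fails the latency, cost, privacy, and audit limits, so the small language model is selected. Returning `None` when nothing qualifies keeps the trade-off explicit: either the constraints or the candidate list has to change.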

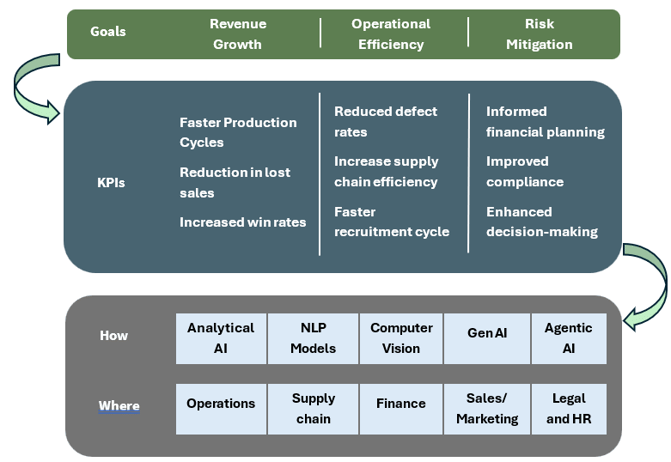

Fig 2: BCE AI Taxonomy, outlining how enterprises can unlock the value of AI

BCE's AI Decision Framework gives leadership teams a structured way to connect strategic objectives to AI capability selection and deployment. It works top-down, starting with context and ending with execution.

Start with competitive context. Before selecting any AI capability, leaders need to understand how AI is reshaping their industry's economics. Where is it shifting cost structures? Where is it changing speed or scale? Where is it creating new barriers to entry? The goal is to identify where AI can strengthen what is already distinctive about the business, not simply automate what is already commoditized. Automation is the floor. The real value lies in AI that deepens a competitive advantage.

Anchor to measurable outcomes. Every AI initiative should tie to the goals leadership teams are already accountable for: revenue growth, measured through faster production cycles, reduced lost sales, and increased win rates; operational efficiency, measured through lower defect rates, better supply chain throughput, and faster hiring; and risk mitigation, measured through improved compliance and more informed decision-making. These targets determine what qualifies as a viable use case and what does not.

Match the right type of AI to the right problem. Anchored on those overarching goals and their corresponding KPIs, the taxonomy decomposes AI into five core capability types: Analytical AI for prediction and optimization, NLP models for language understanding, Computer Vision for perception problems, Generative AI for language generation and synthesis, and Agentic AI for multi-step orchestration requiring autonomous reasoning and adaptive execution across systems. It then maps those capabilities to the business functions where they will be deployed. This is where repeatable workflows are identified, AI is deployed intentionally, productivity metrics are established, and strategic capacity is reclaimed.
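The mapping described above can be sketched as a small lookup structure: classify the problem first, then read off the capability type. The five capability names come from the taxonomy. The problem-type keys, the `UseCase` record, and the example use cases are hypothetical and only illustrate the idea; a real assessment would rest on judgment, not a dictionary.

```python
from dataclasses import dataclass
from enum import Enum


class Capability(Enum):
    """The five core capability types named in the taxonomy."""
    ANALYTICAL_AI = "Analytical AI"
    NLP = "NLP models"
    COMPUTER_VISION = "Computer Vision"
    GENERATIVE_AI = "Generative AI"
    AGENTIC_AI = "Agentic AI"


# Problem type -> capability, following the pairings in the text.
# The key strings are an assumed vocabulary for this sketch.
PROBLEM_TO_CAPABILITY = {
    "prediction": Capability.ANALYTICAL_AI,
    "optimization": Capability.ANALYTICAL_AI,
    "language understanding": Capability.NLP,
    "perception": Capability.COMPUTER_VISION,
    "language generation": Capability.GENERATIVE_AI,
    "synthesis": Capability.GENERATIVE_AI,
    "multi-step orchestration": Capability.AGENTIC_AI,
}


@dataclass(frozen=True)
class UseCase:
    """A hypothetical use case, tied to a business function and a KPI."""
    name: str
    business_function: str
    kpi: str
    problem_type: str


def classify(use_case: UseCase) -> Capability:
    """Return the capability type for a use case, based on its problem type."""
    try:
        return PROBLEM_TO_CAPABILITY[use_case.problem_type]
    except KeyError:
        raise ValueError(
            f"Unclassified problem type: {use_case.problem_type!r}. "
            "Classify the problem before evaluating tools."
        ) from None


# Illustrative use cases; the KPIs echo the outcome measures named earlier.
use_cases = [
    UseCase("demand forecasting", "supply chain", "reduced lost sales", "prediction"),
    UseCase("visual defect inspection", "manufacturing", "lower defect rates", "perception"),
    UseCase("proposal drafting", "sales", "increased win rates", "language generation"),
]

for uc in use_cases:
    print(f"{uc.business_function}: {uc.name} -> {classify(uc).value} (KPI: {uc.kpi})")
```

Raising an error for an unknown problem type is a deliberate choice in this sketch: it forces the classification step to happen before any tool is considered, rather than quietly defaulting to the most visible option.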

This top-down structure is designed to guard against the two failure modes that derail most enterprise AI programs: choosing the wrong tool and deploying it without redesigning the workflow around it. Critically, the taxonomy is not a one-time exercise. A repeatable intelligence cycle, tracking competitor AI deployments, modeling how market shifts could reshape competitive position, and stress-testing current investments against likely disruption scenarios, keeps the taxonomy current and the strategy adaptive.

How BCE can help unlock the value of AI

Unlocking AI value now requires depth of understanding and a constant pulse on a shifting ecosystem. BCE’s approach is to maintain a living view of the opportunity landscape and the tool market, and to translate that into decisions clients can act on. In practice, that means tracking three moving targets: capability evolution (including model right-sizing), the vendor and application landscape by function and industry, and the governance and regulatory environment. With that market voice and a clear taxonomy, teams can define use cases precisely, pick the right capability class, and move from assessment to adoption through a staged execution path.

[1] Menlo Ventures. 2025. “2025: The State of Generative AI in the Enterprise.” Menlo Ventures. December 9, 2025. https://menlovc.com/perspective/2025-the-state-of-generative-ai-in-the-enterprise/

[2] McKinsey & Company. 2025. “AI at Work but Not at Scale.” McKinsey & Company. December 10, 2025. https://www.mckinsey.com/featured-insights/week-in-charts/ai-at-work-but-not-at-scale

[3] Challapally, Aditya, Chris Pease, Ramesh Raskar, and Pradyumna Chari. 2025. “The GenAI Divide: State of AI in Business 2025.” MIT NANDA. https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

[4] Ovide, Shira. 2026. “This Economic Idea Transfixed Wall Street and Washington. It May Be a Mirage.” The Washington Post. February 23, 2026. https://www.washingtonpost.com/technology/2026/02/23/ai-economic-growth-gdp-mirage/

[5] OpenAI. 2026. “The State of Enterprise AI.” OpenAI. March 9, 2026. https://openai.com/business/guides-and-resources/the-state-of-enterprise-ai-2025-report/

